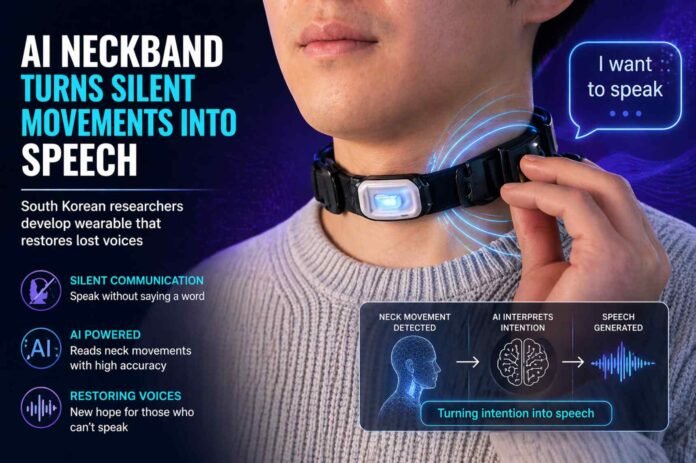

Researchers at South Korea’s Pohang University of Science and Technology have unveiled a prototype wearable that reads subtle movements in the neck and converts them into spoken words. Instead of relying on audible speech, the system uses a small camera embedded in a lightweight neckband to capture minute muscle and skin shifts that occur when a person attempts to speak.

These movements are mapped and interpreted by an AI model trained to recognize patterns linked to specific words. The recognized word is then passed to a speech synthesis engine, which effectively "speaks" on behalf of the wearer. The concept may sound futuristic, but its goal is practical: restoring communication for people who have lost their ability to speak.

What makes this approach stand out is its simplicity. Unlike brain-signal-based systems that depend on bulky equipment, this solution relies on observable physical cues. That makes it more portable and potentially more accessible for real-world use.

Designed to restore lost voices

The team behind the project is particularly focused on medical applications. People who have undergone procedures such as laryngectomy often lose the ability to produce sound, leaving them dependent on alternative communication tools that can feel slow or limiting.

This neckband offers a different path. By translating the natural intention to speak into audible output, it could help users communicate more fluidly. The speech synthesis system can also be trained to mimic the user’s original voice, adding a personal layer that many assistive technologies lack.

Beyond healthcare, the researchers see broader possibilities. The same system could allow workers in loud industrial environments to communicate without shouting over machinery. It might also enable discreet, silent conversations in quiet settings like libraries or meetings.

Early results show promise, with limits

At its current stage, the technology is still in development. Tests show an accuracy rate of 85.8 percent, but this figure applies to a limited vocabulary of just 26 predefined words drawn from the NATO phonetic alphabet (Alpha, Bravo, Charlie, and so on). That means the system can recognize a small set of structured, known inputs fairly well, but it cannot yet handle natural, free-flowing speech.

Performance also drops significantly when the wearer is in motion, with accuracy falling to 39.7 percent. This highlights one of the key challenges ahead: making the system robust enough to function in dynamic, everyday environments.

There are encouraging signs, though. The device maintains its accuracy even in noisy conditions, with sound levels as high as 90 decibels. Because it reads visual cues from the neck rather than listening for sound, ambient noise has little effect on it, an advantage over traditional voice recognition systems that often struggle in similar situations.

A step toward quieter, more flexible communication

While still experimental, the neckband represents an intriguing shift in how we think about human communication. Instead of capturing sound or brain signals, it leverages the physical mechanics of speech itself. This middle ground could prove to be more practical than either extreme.

The road ahead involves expanding vocabulary, improving motion handling, and refining accuracy across different users. With more training data and development, the system could evolve into a versatile communication tool.

If successful, it would not just restore voices for those who have lost them. It could also redefine how we interact in environments where speaking out loud is inconvenient or impossible. Silent, AI-assisted conversations may not be far off, but for now, this prototype is an early glimpse of what that future might look like.

Follow TechBSB For More Updates