- Google expands Pentagon deal allowing Gemini use for any lawful purpose

- Employees protest, citing ethical concerns over military AI involvement

- Contract limits Google’s control over how the AI is used

- Move reflects broader trend of tech firms entering defense sector

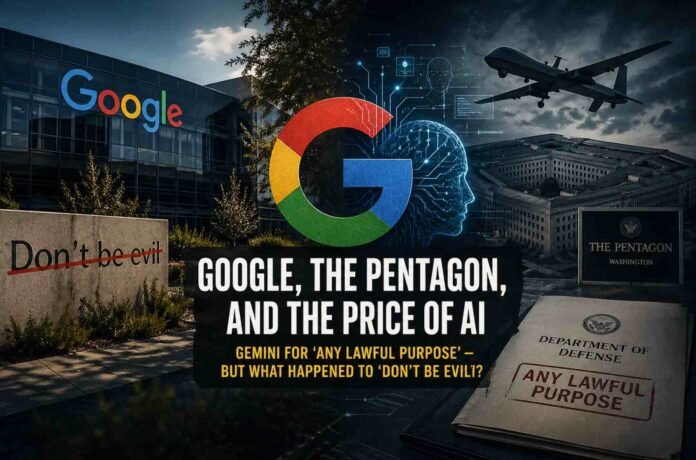

Google’s latest move into deeper collaboration with the US Department of Defense has reignited a familiar debate about the company’s ethics, priorities, and long-evolving identity. Once closely associated with the phrase “Don’t Be Evil,” the company now finds itself defending its role in supplying advanced AI tools for government use, including in classified environments.

The expanded agreement allows the Pentagon to use Google’s Gemini AI for what is described as “any lawful purpose.” On paper, that phrasing may sound like a guardrail. In practice, it leaves wide room for interpretation, particularly in a regulatory landscape where laws can shift quickly in response to political and technological pressures.

At the same time, Google has stepped away from a separate Pentagon initiative involving voice-controlled autonomous drone swarms. Officially, the company cited resource constraints. Reports suggest internal ethical concerns played a significant role in that decision, hinting that not all boundaries have disappeared inside the company.

“Lawful purpose” and the limits of oversight

The phrase “any lawful purpose” sits at the center of the controversy. Critics argue that legality does not always equate to ethical clarity. History offers several examples in which surveillance and data access laws evolved to permit practices that would once have been considered unacceptable.

This concern is amplified by another clause in the agreement stating that Google does not retain the right to veto government operational decisions, as long as they are lawful. In effect, once the technology is deployed, the direction of its use rests almost entirely with the government.

For many observers, this creates a troubling dynamic. AI systems like Gemini are not passive tools. They can be used to process intelligence, enhance surveillance capabilities, and potentially support military operations in ways that were previously impossible or inefficient.

Internal tensions resurface

Inside Google, the backlash has been swift and familiar. Hundreds of employees have signed letters urging leadership to reconsider the company’s involvement in military AI projects. This echoes past protests, most notably the 2018 backlash against Project Maven, which led Google to withdraw from analyzing drone footage for the Pentagon.

Those earlier protests also contributed to the creation of Google’s AI principles, which included commitments to avoid harmful applications such as weapons and surveillance. Over time, however, those principles have been revised, softened, or removed, raising questions about how firmly they were ever embedded in the company’s long-term strategy.

Despite internal dissent, Google leadership has taken a firm public stance. Executives argue that supporting national security can be done responsibly and that engagement with governments is both inevitable and necessary. The tone suggests a company that is less willing to step back from lucrative contracts, even when they generate internal friction.

The business of defense and big tech

Financial incentives are difficult to ignore. Defense contracts represent a significant and stable revenue stream, and Google is not alone in pursuing them. Other major tech players have already secured extensive government partnerships in cloud computing, cybersecurity, and AI.

From a competitive standpoint, Google’s expanded Pentagon deal looks less like a radical shift and more like an attempt to keep pace with rivals. The difference lies in perception. Google’s historical branding around ethics and responsibility creates a sharper contrast when it enters spaces traditionally dominated by defense contractors.

Ultimately, the debate is not just about one contract. It reflects a broader tension between innovation, profit, and responsibility. AI is becoming deeply embedded in national security strategies, and the companies building these systems are increasingly part of that equation.
